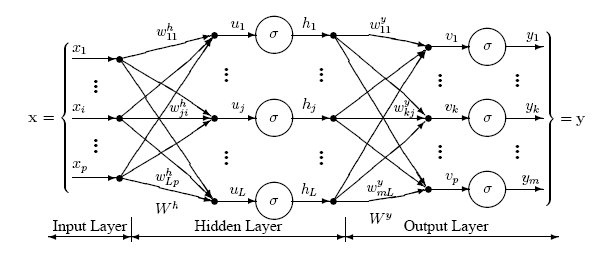

Multilayer Perceptrons (MLPs) are a class of feedforward artificial neural networks with at least three layers of nodes: an input layer, one or more hidden layers, and an output layer.

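Often referred to as “vanilla” neural networks, MLPs apply a nonlinear activation function at each node outside the input layer, which lets them recognize patterns that are not linearly separable. MLPs must be trained on labeled sample data before they can recognize those patterns in test data. Training relies on backpropagation, a supervised learning technique that propagates the output error backward through the layers to adjust the connection weights.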
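The description above can be sketched as a small three-layer network trained with backpropagation. This is a minimal illustration, not a reference implementation: the `TinyMLP` class, the XOR toy dataset, the tanh and sigmoid activations, the squared-error loss, and the learning rate are all assumptions chosen for the example.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class TinyMLP:
    """Hypothetical MLP: input layer, one hidden layer, one output node."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        # Each hidden node holds one weight per input plus a bias (last entry).
        self.w_hidden = [[rng.uniform(-1, 1) for _ in range(n_in + 1)]
                         for _ in range(n_hidden)]
        # The output node holds one weight per hidden node plus a bias.
        self.w_out = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]

    def forward(self, x):
        # Hidden layer: weighted sum followed by a nonlinear activation (tanh).
        hidden = [math.tanh(sum(w * xi for w, xi in zip(ws[:-1], x)) + ws[-1])
                  for ws in self.w_hidden]
        # Output layer: weighted sum followed by a sigmoid.
        out = sigmoid(sum(w * h for w, h in zip(self.w_out[:-1], hidden))
                      + self.w_out[-1])
        return hidden, out

    def train_step(self, x, y, lr):
        hidden, out = self.forward(x)
        # Backpropagation for the loss 0.5 * (out - y)^2.
        # Error signal at the output node (includes the sigmoid derivative).
        delta_out = (out - y) * out * (1.0 - out)
        # Error signals at the hidden nodes, computed before any weight update.
        delta_hidden = [delta_out * self.w_out[j] * (1.0 - hidden[j] ** 2)
                        for j in range(len(hidden))]
        # Gradient descent update for the output weights and bias.
        for j, h in enumerate(hidden):
            self.w_out[j] -= lr * delta_out * h
        self.w_out[-1] -= lr * delta_out
        # Gradient descent update for the hidden weights and biases.
        for j, ws in enumerate(self.w_hidden):
            for i, xi in enumerate(x):
                ws[i] -= lr * delta_hidden[j] * xi
            ws[-1] -= lr * delta_hidden[j]


def mean_squared_error(model, data):
    return sum((model.forward(x)[1] - y) ** 2 for x, y in data) / len(data)


# Toy sample data (assumed for illustration): XOR is not linearly separable.
XOR = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0),
       ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]

model = TinyMLP(n_in=2, n_hidden=4, seed=0)
loss_before = mean_squared_error(model, XOR)
for _ in range(5000):
    for x, y in XOR:
        model.train_step(x, y, lr=0.5)
loss_after = mean_squared_error(model, XOR)
print(f"MSE before training: {loss_before:.4f}, after: {loss_after:.4f}")
```

The hidden layer's nonlinearity is what matters here: without it, the stacked layers would collapse into a single linear map, which cannot fit XOR. In practice a library such as scikit-learn or PyTorch would usually replace this hand-written loop.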

Read more on Wikipedia.
